AI isn’t coming – it’s already here, quietly working behind the scenes, updating itself, and occasionally making decisions you didn’t realize you outsourced. In this episode, we unpack the chaos (yes, chaos) of modern AI adoption, especially when it sneaks in through third-party vendors and tools you already use every day. Think less “cool futuristic tech” and more “did we just pour gasoline on our existing risks?” If you’ve ever wondered whether you’re actually using AI… spoiler alert: you are. That is why the new Third-Party AI Risk and Supply Chain Transparency Guide from HSCC was created.

In this episode:

You Didn’t Invite AI – But Your Vendor Did – Ep 558

Today’s Episode is brought to you by:

Kardon

and

HIPAA for MSPs with Security First IT


You Didn’t Invite AI – But Your Vendor Did

[00:40] AI wasn’t on your guest list… but it showed up anyway. Unfortunately, AI will keep showing up in places you didn’t expect, sometimes as a perfect party guest and other times… well, not so much.

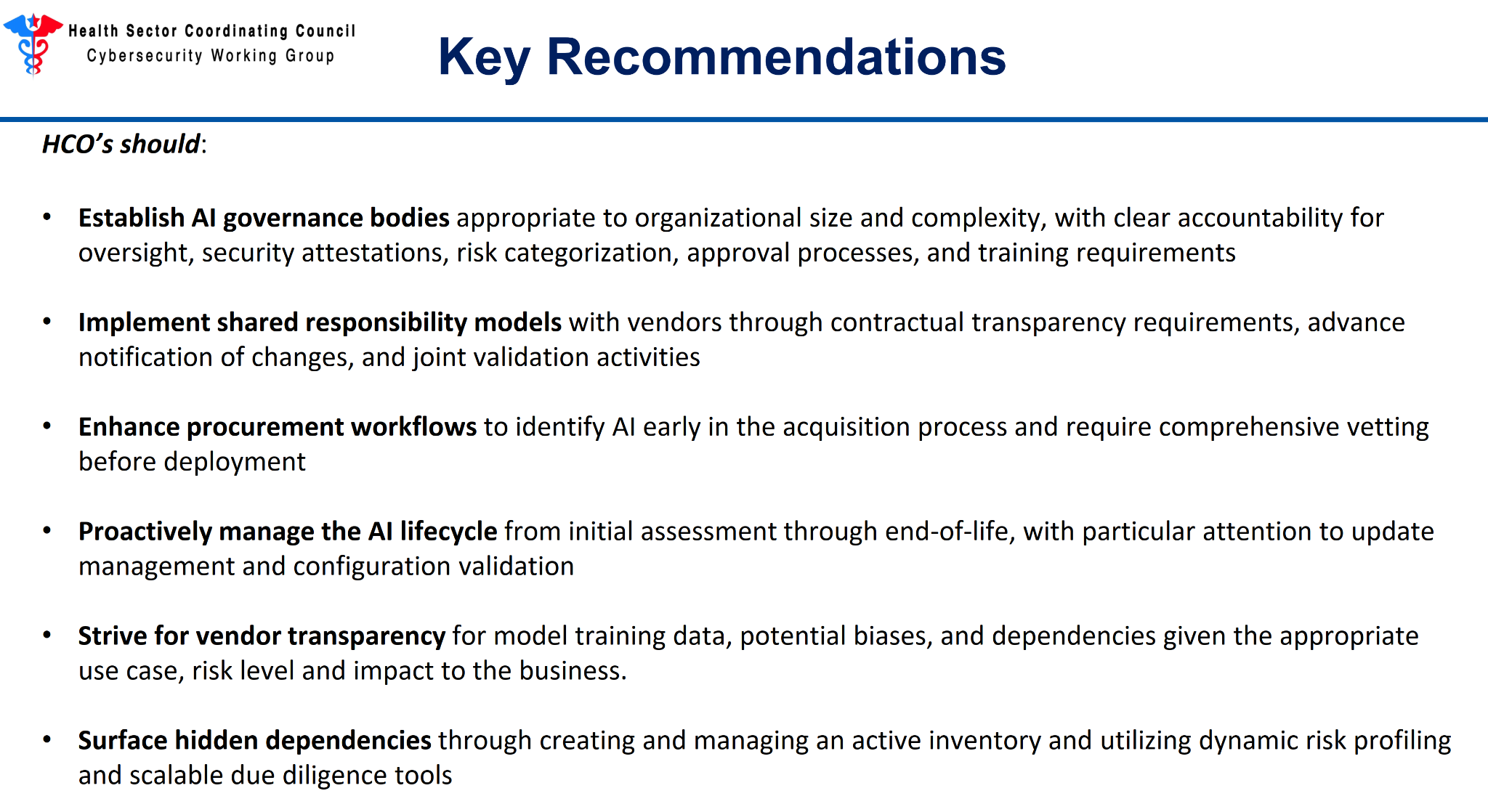

We all have to realize that when your vendor uses AI, you need to know what they are doing with it, just as you would when you use AI yourself. Managing the risk AI brings to the table is new to all of us. That’s why the Healthcare Sector Coordinating Council (HSCC) Cybersecurity Working Group (CWG) has released the Third-Party AI Risk and Supply Chain Transparency Guide: so we can build risk management plans for the things we see and even the ones we don’t see right away.

Let’s get started by saying we will not repeat that title over and over! Greg Garcia came up with the name 3PAIR, and it works for us.

[07:09] Embrace the Chaos: AI Is Already in Your Vendor Stack

- Your vendor doesn’t have to sell AI to be an AI vendor

- EHRs adding AI features

- Billing tools using AI for automation

- Support teams using AI copilots

- Security tools using AI detection

- AI embedded across clinical, operational, and infrastructure systems

- AI capabilities proliferating without formal oversight

When that happens, privacy and security concerns often aren’t even in the mix, and the gaps that result can blow up in your face any minute – yes, any minute.

Why This Is a Problem (And Why 3PAIR Exists)

AI introduces risks that traditional vendor risk management doesn’t cover:

- Lack of visibility

- Hidden dependencies

- Vendors shifting risk

“Opacity in AI supply chains elevates systemic exposure and risk.” (page 5)

Basically, you can’t manage risk you can’t see. Or, our favorite – You don’t know what you don’t know until you know it.

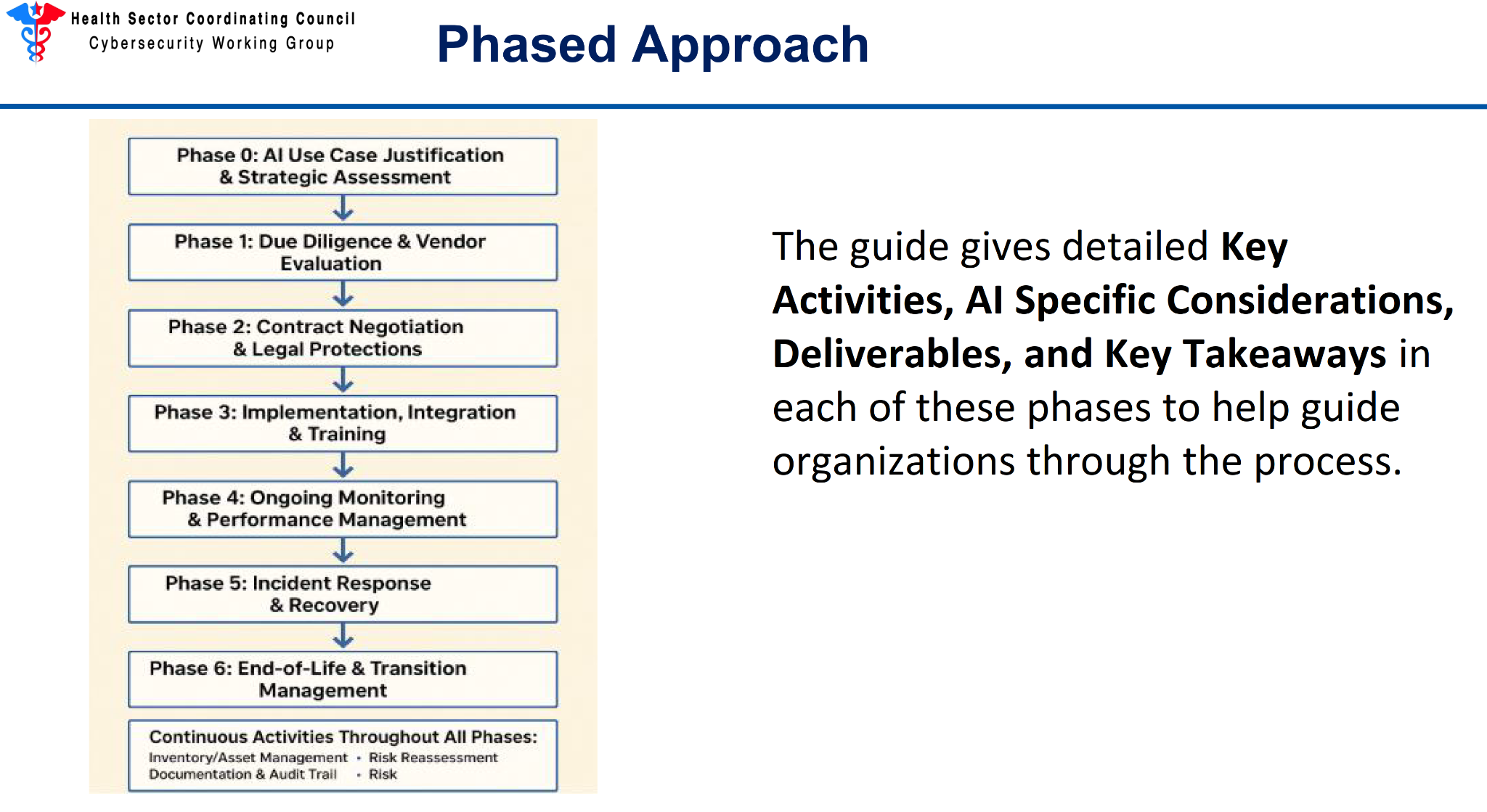

[14:23] The Big Idea: This Is a Lifecycle, Not a Checklist

In the guide, the working group lays out a phased approach to addressing vendor AI risk.

- Not linear

- Use what you need, when you need it

Simplified phases:

- Before you buy

- While you buy

- When you deploy

- While it runs

- When it breaks

- When it’s retired

Most orgs stop at vendor due diligence… and that’s not enough anymore.
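One way to make the lifecycle idea concrete is to track which phases you have actually planned for with each vendor. The sketch below is only an illustration. The phase labels come from the simplified list above, while `Phase`, `missing_phases`, and the sample vendor record are hypothetical names of ours and are not taken from the 3PAIR guide.

```python
from enum import Enum


class Phase(Enum):
    """Simplified lifecycle phases (our shorthand labels, not the guide's wording)."""
    BEFORE_YOU_BUY = "Before you buy"
    WHILE_YOU_BUY = "While you buy"
    WHEN_YOU_DEPLOY = "When you deploy"
    WHILE_IT_RUNS = "While it runs"
    WHEN_IT_BREAKS = "When it breaks"
    WHEN_ITS_RETIRED = "When it's retired"


def missing_phases(covered):
    """Return the phases with no documented plan, in lifecycle order."""
    return [phase for phase in Phase if phase not in covered]


# Hypothetical vendor record: only the due diligence phases are covered.
billing_tool_covered = {Phase.BEFORE_YOU_BUY, Phase.WHILE_YOU_BUY}

for phase in missing_phases(billing_tool_covered):
    print(f"No plan yet for: {phase.value}")
```

Because the approach is not linear, the function only reports gaps. It does not force you to close them in any particular order.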

The Mic Drop: You Might Not Need AI At All

“Not every problem requires an AI solution. This phase prevents unnecessary AI adoption and ensures that when AI is pursued, there is clear strategic alignment and understanding of the risk profile before resources are committed to vendor evaluation.” (page 13)

SMB advice – well, for everyone really:

- Avoid chasing AI just because it’s there

- Focus on value vs risk

This is especially important when AI is introduced indirectly through vendors and you don’t even know you are using it to solve a problem.

The “Plus One” Problem

You invited a vendor… They brought AI with them to the party.

And that AI:

- may use third-party models

- may rely on other vendors

- may change over time

Tying this to the guide:

“Limited visibility into AI components sourced through layered supply chains” (page 7)

It’s not just your vendor… it’s your vendor’s vendors you have to worry about in this new world.
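To picture the “vendor’s vendors” problem, it can help to treat the supply chain as a simple map and walk it. This is a rough sketch under stated assumptions: the vendor names, the `AI_SUPPLIERS` map, and the `downstream_ai_suppliers` function are all made up for illustration, and in practice you would only learn these relationships by asking your vendors.

```python
from collections import deque

# Hypothetical map: each vendor -> the AI suppliers it relies on.
# Every name here is invented for illustration.
AI_SUPPLIERS = {
    "EHR vendor": ["Transcription startup"],
    "Transcription startup": ["Model provider", "Cloud host"],
    "Billing tool": ["Model provider"],
}


def downstream_ai_suppliers(vendor, suppliers):
    """Breadth-first walk listing every supplier reachable from `vendor`."""
    seen = []
    queue = deque(suppliers.get(vendor, []))
    while queue:
        current = queue.popleft()
        # Skip repeats so circular relationships cannot loop forever.
        if current in seen or current == vendor:
            continue
        seen.append(current)
        queue.extend(suppliers.get(current, []))
    return seen


print(downstream_ai_suppliers("EHR vendor", AI_SUPPLIERS))
```

In this made-up example you signed one contract with the EHR vendor, but three other parties end up in the picture.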

[21:48] Contracts Are Now Security Controls

Key shift:

Contracts now must manage AI risk

Examples:

- No training on your data without consent

- Notification of AI changes

- Transparency requirements

“Standard software licensing agreements… are insufficient for AI systems.” (page 16)
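If you treat contract terms as controls, you can check for them the same way you check any other control. The sketch below is a minimal illustration, assuming you record which AI clauses each contract contains. The clause descriptions come from the examples above, while the dictionary keys, `contract_gaps`, and the sample contract are hypothetical and are not sample language from the guide.

```python
# Clause descriptions are the examples listed above; the keys are our own shorthand.
REQUIRED_AI_CLAUSES = {
    "no_training_without_consent": "No training on your data without consent",
    "ai_change_notification": "Notification of AI changes",
    "transparency": "Transparency requirements",
}


def contract_gaps(clauses_present):
    """Return descriptions of the required AI clauses a contract is missing."""
    return [
        description
        for key, description in REQUIRED_AI_CLAUSES.items()
        if key not in clauses_present
    ]


# Hypothetical review result for one vendor contract.
ehr_contract = {"transparency"}

for gap in contract_gaps(ehr_contract):
    print(f"Missing clause: {gap}")
```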

This Isn’t Just IT – It’s Patient Safety

AI impacts:

- Clinical decisions

- Operations

- Outcomes

AI errors can be subtle, not obvious.

Risk isn’t always immediate.

AI failures can be gradual and hard to detect, but also devastating.

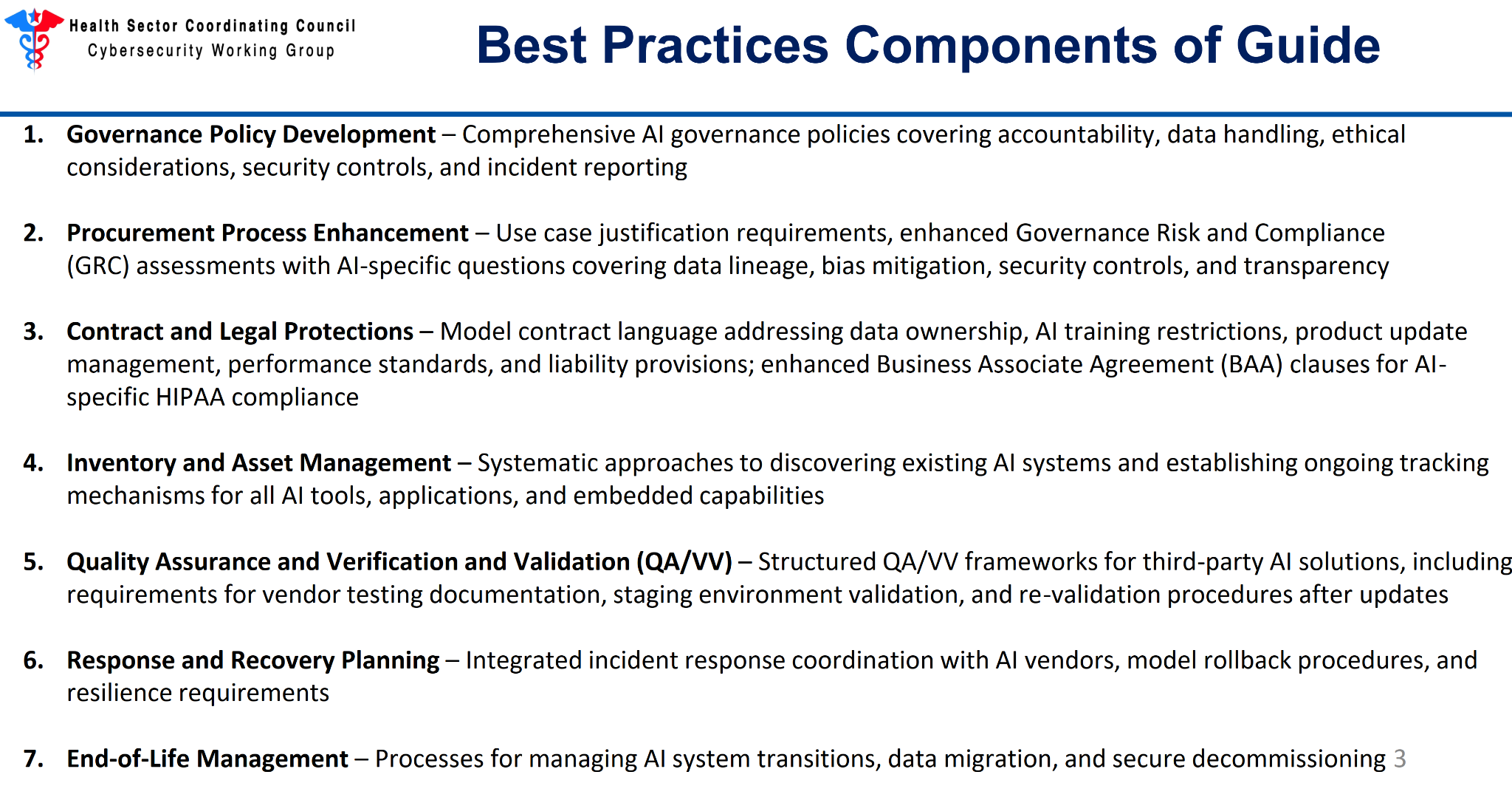

Bonus Resources

- Use Case Justification Template and Risk Level Definitions

- Sample AI Governance Policy

- Inventory Management Guidance

- RACI Matrix for identifying clear roles/responsibilities

- Sample Contract Language for both Commercial Contract and BAA

- AI-Specific Vendor Assessment Questions for Procurement and GRC

- User Training Completion Checklist and Complete Training Curriculum

- Quality Assurance/Verification/Validation Recommendations

At the end of the day, AI isn’t something you can avoid—it’s something you have to manage. It’s already embedded in your workflows, your tools, and your vendors, often evolving faster than your policies (and awareness) can keep up. The real risk isn’t just the technology itself—it’s the lack of visibility, control, and understanding of how it’s being used behind the scenes. The good news? You don’t have to solve everything overnight. But you do need to start asking better questions, demanding transparency, and putting guardrails in place. Because in a world where AI is moving fast and showing up everywhere, the worst strategy is pretending it’s not already part of your reality.

Remember to follow us and share us on your favorite social media site. Rate us on your podcasting apps; we need your help to keep spreading the word. As always, send in your questions and ideas!

HIPAA is not about compliance, it’s about patient care.™

Special thanks to our sponsors Security First IT and Kardon.